Generating insights from raw data, rather than from structured and contextualized data, has long been a challenge for AI algorithms and models. With machine learning, however, sensor data can be analyzed in real time, giving people access to information that previously went untapped.

As industrial companies know, monitoring and continuously improving product quality over time is a challenging task, particularly when a low-cost, high-volume strategy isn’t viable or when social, environmental, energy or raw material constraints apply. Brands are built on the quality of their products, and their reputation depends on it.

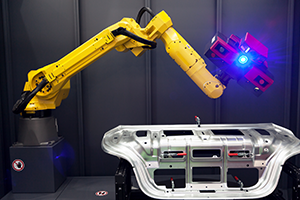

Quality is usually assessed by people who visually check for issues near the end of the production process. This is time-consuming and error-prone, and by then it is generally too late to improve the current batch. Could we accelerate and automate this step, in which humans turn real-world information (such as the presence of a scratch on a surface) into labelled, usable data (such as: batch 834/quality defect/scratch/type of scratch/estimated value, …)?

Automated QA systems are incomplete without AI

Industrial companies already use visual recognition to improve process quality, but these programs, whose rules are written by hand by programmers, can only tackle a small number of well-defined problems. Exploiting raw, unprocessed big data from the real world with neural network algorithms that learn by themselves would give companies an enormous competitive advantage through predictive quality (‘what the final quality will be’) and prescriptive quality (‘you should use these settings to achieve your quality objectives’). Such algorithms would draw on photos, videos, heat distribution and other electromagnetic wave data, as well as sound (such as vibrations).

“A picture condenses a large amount of information”, explains José Andrés García, Wizata’s Chief Operation Officer. “If you had to know when to replace a cylinder on a conveyor belt, an efficient visual analysis could tell you precisely when the time is right, instead of having to track every second for two years the effort that was put on the specific equipment, the tension over time, the covered distance, etc.” Similarly, opening and inspecting an industrial gearbox while the whole production line is stopped is grossly inefficient compared with an AI analysis of an audio recording of the equipment. The recording can be decomposed to detect and track specific issues without opening the gearbox, such as a particular ball bearing that is failing and will need replacement in less than two weeks.
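To make the idea of decomposing a recording more concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not Wizata’s method: it assumes a fault shows up as extra energy in a known frequency band, the signals are synthetic, and the function name `band_energy_share`, the 8 kHz sample rate and the 1,100 to 1,300 Hz band are all hypothetical choices.

```python
import numpy as np


def band_energy_share(signal, sample_rate, low_hz, high_hz):
    """Return the fraction of (non-DC) spectral energy between low_hz and high_hz."""
    power = np.abs(np.fft.rfft(signal)) ** 2
    freqs = np.fft.rfftfreq(len(signal), d=1.0 / sample_rate)
    in_band = (freqs >= low_hz) & (freqs <= high_hz)
    total = power[1:].sum()  # skip the DC component at index 0
    return float(power[in_band].sum() / total)


# Synthetic one-second recordings (assumption: real data would come from a microphone
# or accelerometer mounted on the equipment).
sample_rate = 8000
t = np.arange(sample_rate) / sample_rate
healthy = np.sin(2 * np.pi * 50 * t)                    # hypothetical shaft rotation tone
faulty = healthy + 0.5 * np.sin(2 * np.pi * 1200 * t)   # hypothetical bearing fault tone

healthy_share = band_energy_share(healthy, sample_rate, 1100, 1300)
faulty_share = band_energy_share(faulty, sample_rate, 1100, 1300)

print(f"healthy: {healthy_share:.3f}, faulty: {faulty_share:.3f}")
```

Tracking such a band share over days or weeks, rather than at a single instant, is what would let a system follow a developing fault. The band to watch depends on the equipment and would have to be established from real recordings.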

AI makes sense of the real state of production by analyzing every bit of unbiased, raw data

Working with raw data also reduces the risks of using a biased dataset. “Depending on the use case, we may connect to the raw data from the sensors and analyze it live, because information can be lost, altered or compressed before storage. We could even get better results working with a dataset of 1 year of raw data instead of 10 years of stored, aggregated data.”
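A small Python sketch can show how aggregation before storage may hide information. All values here are invented for illustration: a hypothetical oven temperature sampled once per second for an hour, with a five-second excursion and a made-up alarm threshold of 250 °C.

```python
import numpy as np

# Hypothetical raw signal: one hour of oven temperature at 1 reading per second.
raw = np.full(3600, 200.0)
raw[1800:1805] = 260.0  # a 5-second excursion in the middle of the hour

# What a historian might store instead: one average per minute.
minute_means = raw.reshape(60, 60).mean(axis=1)

alarm_threshold = 250.0  # assumed limit, for illustration only
seen_in_raw = bool((raw > alarm_threshold).any())
seen_in_aggregate = bool((minute_means > alarm_threshold).any())

print("excursion visible in raw data:", seen_in_raw)
print("excursion visible in minute averages:", seen_in_aggregate)
print("highest minute average:", minute_means.max())
```

The excursion reaches 260 °C in the raw readings, yet the affected minute averages only 205 °C, so a model trained on the stored averages would never see the event.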

Autonomous operations

Automatic analysis of raw data is a requirement for autonomous operations, where humans are either too slow to react in time to avoid an incident or cannot operate machines from close by because of electromagnetic interference and radiation, such as around Fukushima Daiichi. “Sensors can feel what we don’t feel and go where we can’t go, for example recording heat inside an oven”, adds Franck Bettinger, Senior Data Scientist at Wizata. “Taking advantage of computer programs to see and analyze parts of sensory data that are inaccessible to us, such as X-rays or infrared, helps in making an objective judgement on the quality of a batch. Perceiving a gradual color change on 2 km of coils with your own eyes is next to impossible: your brain would automatically compensate for any change.”
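The point about gradual color change can be sketched in a few lines of Python. This is a simplified illustration with invented numbers: a hypothetical color value measured once per meter along a 2 km coil, drifting slowly, and an assumed tolerance of 1 unit. A program can compare every reading against a fixed reference, whereas an observer mostly compares each point with its neighbor.

```python
import numpy as np

# Hypothetical color reading (arbitrary 0-100 scale), one per meter of coil.
positions_m = np.arange(2000)
color = 50.0 + 3.0 * positions_m / positions_m[-1]  # slow drift of 3 units in total

# Change between neighboring readings: far too small to notice.
largest_step = float(np.abs(np.diff(color)).max())

# Change relative to a fixed reference (the start of the coil).
drift = np.abs(color - color[0])
tolerance = 1.0  # assumed quality tolerance, for illustration only
first_out_of_tolerance_m = int(np.argmax(drift > tolerance))

print(f"largest meter-to-meter change: {largest_step:.4f}")
print(f"tolerance first exceeded at: {first_out_of_tolerance_m} m")
```

No single step comes close to the tolerance, but the comparison against the fixed reference flags the coil about a third of the way along its length.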

Do you want to start harnessing the power of your production data? Check out our ebooks with expert advice from our data scientists and data engineers, or contact us to book your exploratory workshop.